The End of the Cloud

By the PredictionOracle Research Division — Substrate-Tier Strategic Analysis

There was a moment — sometime in late 2025, though no one can agree on the exact date — when the metaphor broke.

For two decades, “the Cloud” had served as the organizing fiction of the digital economy. Your data lived “in the cloud.” Your applications ran “in the cloud.” Your company’s entire operational logic floated somewhere above you, suspended in an ethereal layer of abstraction that required no physical address, no property line, and no utility bill. The metaphor was comfortable. It suggested weightlessness, omnipresence, infinite scalability. It implied that the hard questions of infrastructure — where does the electricity come from, who owns the wire, what happens when the power goes out — had been permanently solved by someone else, somewhere else, in a server room you would never need to visit.

The metaphor was a lie. Not a malicious one. A useful one, like most lies that survive for twenty years. But a lie nonetheless, and in 2026, the physics caught up. What follows in this volume is the forensic account of that collision — the moment when the digital economy’s most sophisticated participants discovered that their entire competitive position rested on a foundation of copper wire, enriched uranium, and cold water.

The Moment the Metaphor Broke

The breaking point was not a single event but a convergence of three physical constraints that arrived simultaneously and refused to be abstracted away. Understanding each one individually is insufficient; their power lies in the fact that they arrived together, amplifying one another in ways that no single constraint could have produced alone.

The first was wattage: NVIDIA’s Blackwell B200 GPU, the engine of the next generation of AI reasoning, consumes between 700 and 1,200 watts per chip. A single GB200 NVL72 rack — seventy-two of these chips arranged in a dense inference configuration — draws 120 kilowatts. That is not a server. That is a small factory. And hyperscalers have ordered 3.6 million of these chips for deployment through mid-2026, each one a persistent, non-negotiable load on whatever electrical grid happens to be connected to the building it sits in. The International Energy Agency projects that global data center electricity consumption will approach 1,000 terawatt-hours by the end of 2026 — approximately 4% of total global electricity generation devoted to the act of computation.
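The arithmetic behind these figures is worth making explicit. The following is a minimal sketch in Python that uses only the numbers quoted above; the function names are illustrative, and the assumption that every ordered chip ends up in a 72-chip, 120-kilowatt rack is ours, made purely to bound the estimate.

```python
# Figures taken from the paragraph above; nothing here is measured data.
CHIP_WATTS_LOW = 700         # lower bound per chip, in watts
CHIP_WATTS_HIGH = 1_200      # upper bound per chip, in watts
CHIPS_PER_RACK = 72          # chips in one GB200 NVL72 rack
RACK_WATTS = 120_000         # quoted draw of a full rack, in watts
CHIPS_ORDERED = 3_600_000    # chips ordered through mid-2026


def rack_chip_load_kw(watts_per_chip: int) -> float:
    """Power drawn by the chips alone in one rack, in kilowatts."""
    return watts_per_chip * CHIPS_PER_RACK / 1_000


def fleet_chip_load_gw(watts_per_chip: int) -> float:
    """Power drawn by every ordered chip, counting chips only, in gigawatts."""
    return watts_per_chip * CHIPS_ORDERED / 1e9


def fleet_rack_load_gw() -> float:
    """Fleet draw in gigawatts if every chip sat in a 120 kW rack.

    Assumption (ours): all ordered chips are deployed in full 72-chip racks.
    """
    racks = CHIPS_ORDERED // CHIPS_PER_RACK
    return racks * RACK_WATTS / 1e9


if __name__ == "__main__":
    print("chips per rack, kW:", rack_chip_load_kw(CHIP_WATTS_LOW),
          "to", rack_chip_load_kw(CHIP_WATTS_HIGH))
    print("fleet, chips only, GW:", fleet_chip_load_gw(CHIP_WATTS_LOW),
          "to", fleet_chip_load_gw(CHIP_WATTS_HIGH))
    print("fleet at rack level, GW:", fleet_rack_load_gw())
```

On these inputs the chips account for roughly 50 to 86 kilowatts of each rack's 120, and the ordered fleet represents about 2.5 to 4.3 gigawatts at the chip level, or about 6 gigawatts if counted at the quoted rack figure. The gap between the chip-level and rack-level totals is everything else in the rack, which the quoted figures do not break down.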

The second constraint was heat. A rack drawing 120 kilowatts generates thermal output that conventional air cooling cannot remove at that density. The laws of thermodynamics do not negotiate with software engineers. At these power densities, the only viable thermal management solution is liquid cooling: cold plates mounted directly on the chips, or full immersion in engineered dielectric fluid, with the waste heat pumped out through closed-loop liquid circuits. This is not a future technology. It is a present requirement, and the global liquid cooling market is growing at a compound annual rate of 26% because the alternative is watching a $400,000 GPU cluster exceed its thermal design envelope and destroy itself. Vertiv, Schneider Electric, and a rapidly consolidating ecosystem of cooling specialists are racing to deliver the infrastructure that makes this density survivable.

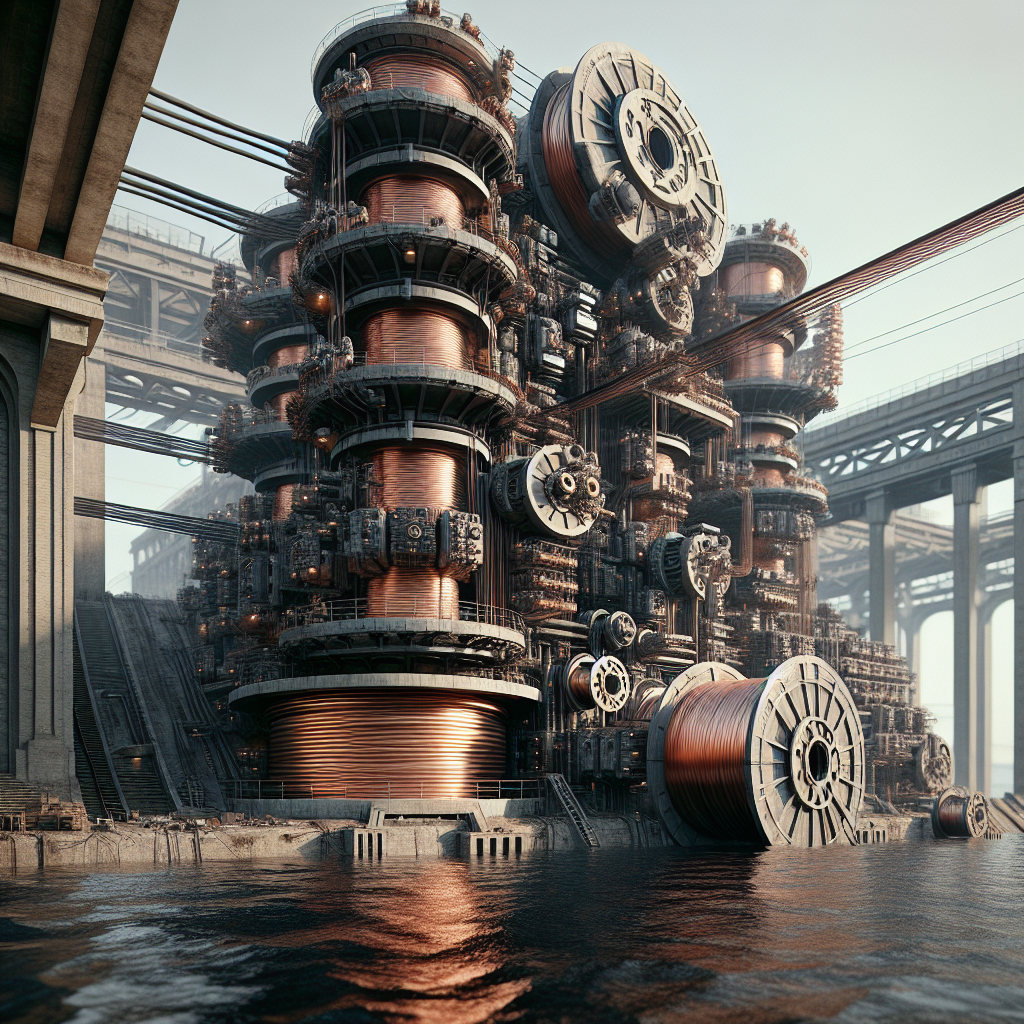

The third constraint was material scarcity. The copper wiring that connects these racks to the grid, the gallium that forms the substrate of the power semiconductors, and the High-Bandwidth Memory (HBM) chips that feed data to the processors at sufficient speed: all three are in structural deficit. The global copper market faces a shortfall of 1.5 to 2.5 million metric tons through 2027, driven by multi-year underinvestment in mining capacity and declining ore grades at existing operations. China controls 98% of the world’s low-purity gallium supply and has imposed export restrictions, triggering a 300% price surge since 2023. HBM capacity from all three major suppliers (SK Hynix, Samsung, and Micron) is sold out through 2026, with fabrication plant construction timelines of three to five years preventing rapid expansion. The cloud, it turns out, is made of copper, gallium, and memory chips. And all three are running short.

From Software Companies to Utility Companies

The convergence of these three constraints forced a recognition that the major technology platforms had been resisting for years: they are not software companies. They are utility companies. Their competitive advantage is no longer measured in lines of code or model parameters but in megawatts of secured generation, tons of contracted copper, and liters-per-minute of coolant flow.

Microsoft’s most strategically important recent deal was not a product launch or the acquisition of a software startup. It was a twenty-year Power Purchase Agreement with Constellation Energy, announced in September 2024, to restart Three Mile Island Unit 1, a reactor of approximately 835 megawatts scheduled to resume operations in 2027 and devoted primarily to powering Microsoft’s Azure inference infrastructure. Amazon is not investing in X-energy’s small modular reactors because nuclear physics is a passion project. It is investing because its AWS inference clusters cannot scale without sovereign electricity that does not depend on a municipal grid, a regulatory commission, or a utility company that might decide to raise rates or reallocate capacity.

Meta has signed agreements with Oklo for 1.2 gigawatts of dedicated nuclear capacity in Ohio. Switch has committed to 12 gigawatts. These are not “sustainability initiatives” designed to burnish a corporate ESG profile. They are survival decisions: the recognition that in a world where intelligence is infinite but watts are finite, the entity that controls the socket controls the substrate. Those that do not control their own power supply are, in the most literal sense, at the mercy of someone who does.

What This Book Is About

Book 1: The Singularity of Friction mapped the software inversion — the moment when algorithmic velocity outpaced institutional adaptation and fractured the world into Legacy and Synthesis. It was a book about logic, about clock speeds, about the death of the 4-year degree and the birth of the Architect. It documented the collapse of friction in the digital layer and predicted the consequences for education, governance, finance, and labor markets.

Book 2: The Energy Island is about what happens after the software wins. It is about the discovery that infinite intelligence without sovereign energy is a sports car without fuel — beautiful, engineered to perfection, and going absolutely nowhere. This volume maps the Revenge of Atoms: the hard, thermodynamic, geological, and geopolitical reality that the Synthesis World runs on physical inputs that cannot be copied, cannot be downloaded, and cannot be abstracted into a metaphor. It examines the Small Modular Reactor renaissance, the copper and gallium supply crises, the liquid cooling migration, and the emergence of sovereign “Energy Islands” — self-contained jurisdictional units of power generation, compute infrastructure, and governance that are redrawing the map of global economic power.

The analysis that follows draws on data from the International Energy Agency, S&P Global, the US Nuclear Regulatory Commission, commodity market projections, and engineering specifications from NVIDIA, Oklo, NuScale, and X-energy, synthesized through the PredictionOracle’s proprietary trend-analysis framework. Every data point has been verified against primary sources. Every projection is grounded in engineering forecasts, not speculative extrapolation.

The Cloud is dead. Welcome to the Island.
